The Invisible Assembly Line: Why AI Articles Aren’t ‘Written’ the Way You Think

Most readers picture a single model conjuring paragraphs on demand. The truth is messier and more industrial: AI content moves along an invisible assembly line of micro-processes. A generation pipeline typically includes prompt orchestration, retrieval of relevant documents, modular micro-prompts for each section (headline, intro, body, CTA), coherence post-processing, quality filters and SEO scoring. Each stage can be run by a separate specialised model or script. The final article is therefore less a single creative act than the product of many specialised tools stitched together, some running in real time, others precomputing facts or scoring readability. This explains why some AI articles feel uncanny: they’re composites rather than monologues, and the seams show when modules disagree.
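As a rough illustration of that assembly line, the sketch below chains three stand-in stages over a shared draft object. The names `Draft`, `retrieve`, `write_sections`, `quality_filter` and `run_pipeline` are hypothetical, and each stage is a placeholder for what would be a model call or a script in a real system.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    """Working state handed from one stage to the next."""
    topic: str
    sections: dict[str, str] = field(default_factory=dict)
    notes: list[str] = field(default_factory=list)


# A stage is any function that takes a draft and returns a draft.
Stage = Callable[[Draft], Draft]


def retrieve(draft: Draft) -> Draft:
    # Stand-in for document retrieval; a real system would query an index.
    draft.notes.append(f"retrieved background for '{draft.topic}'")
    return draft


def write_sections(draft: Draft) -> Draft:
    # One micro-prompt per section; here each result is a placeholder string.
    for name in ("headline", "intro", "body", "cta"):
        draft.sections[name] = f"[{name} about {draft.topic}]"
    return draft


def quality_filter(draft: Draft) -> Draft:
    # Record empty sections instead of silently passing them on.
    missing = [n for n, text in draft.sections.items() if not text.strip()]
    draft.notes.append("quality: ok" if not missing else f"quality: missing {missing}")
    return draft


def run_pipeline(draft: Draft, stages: list[Stage]) -> Draft:
    # Apply the stages in order, passing the draft along the line.
    for stage in stages:
        draft = stage(draft)
    return draft


result = run_pipeline(
    Draft(topic="solar panels"),
    [retrieve, write_sections, quality_filter],
)
```

Because every stage shares one signature, a stage can be swapped, reordered or removed without touching the others, which is also where the seams between modules come from.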

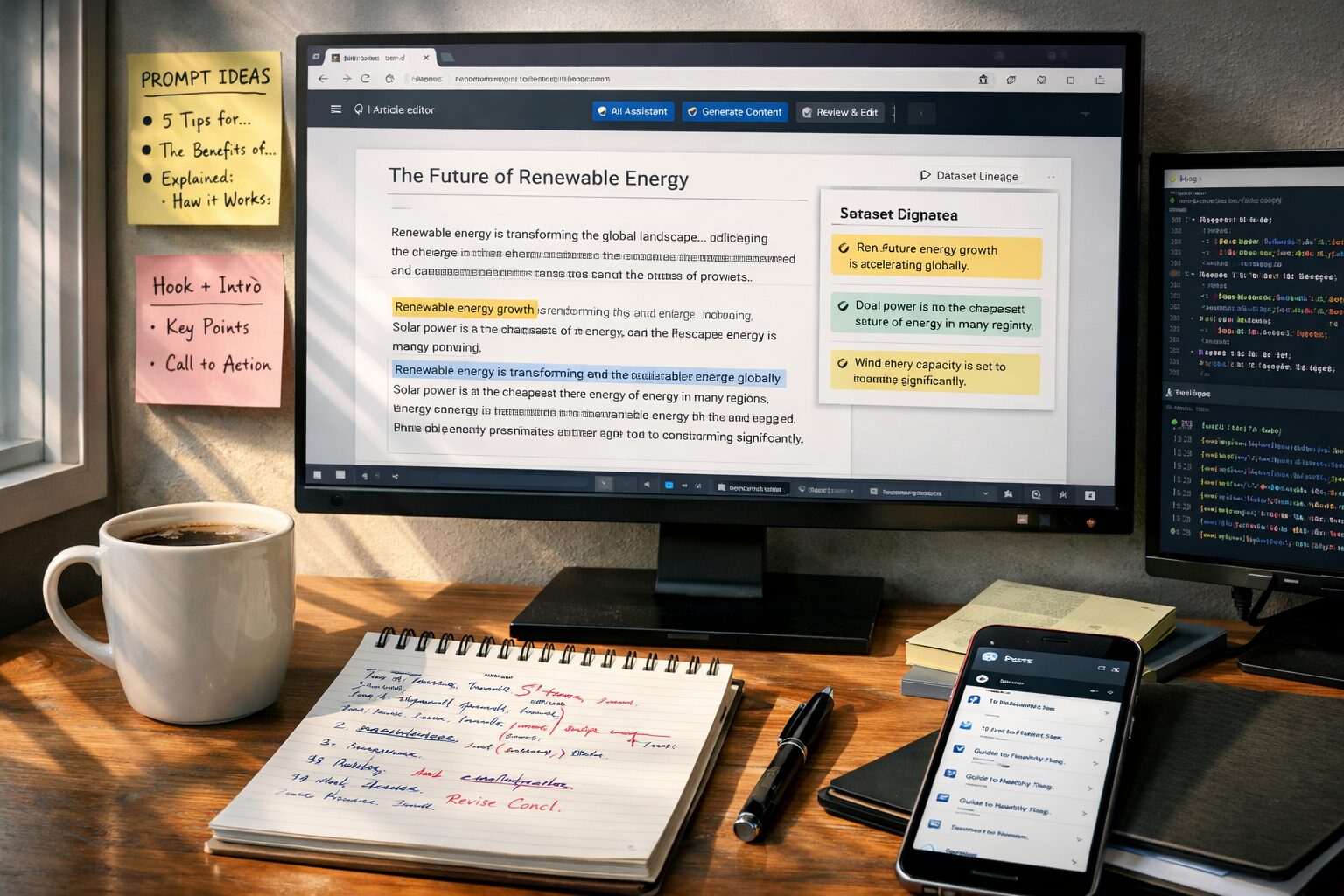

Data Lineage: The Sources Nobody Talks About (and Why They Matter)

People assume AI content is trained on ‘the internet’ generically. In practice, data lineage (the exact datasets, and their timestamps, used for retrieval or fine-tuning) radically changes the output. Models may consult scraped web pages, public datasets, internal proprietary knowledge bases, or cached snapshots. When an AI cites a statistic or claims that an event occurred, that assertion can often be traced back to a retrieval index rather than to raw ‘knowledge’. This is why platform-specific connectors matter: a system wired into up-to-date news feeds or an enterprise CMS will write differently from one relying on static snapshots. That nuance is crucial for credibility, and it’s why services that integrate directly with WordPress or HubSpot (for example, autoarticle.net) can produce articles that better reflect a company’s live content and tone.
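To make the effect of lineage concrete, here is a minimal sketch in which every retrievable passage carries its source and snapshot date. The `SourceRecord` type, the source labels and the sample texts are invented for illustration; the point is only that the same query returns different evidence depending on which snapshots are allowed in.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SourceRecord:
    source: str      # hypothetical label, e.g. "cms" or "web-snapshot"
    snapshot: date   # when the content was captured
    text: str


def retrieve(index: list[SourceRecord], query: str, not_before: date) -> list[SourceRecord]:
    """Return records that mention the query and are recent enough, newest first."""
    hits = [
        r for r in index
        if query.lower() in r.text.lower() and r.snapshot >= not_before
    ]
    return sorted(hits, key=lambda r: r.snapshot, reverse=True)


# Made-up sample data: an old web snapshot and a newer CMS entry disagree.
index = [
    SourceRecord("web-snapshot", date(2022, 3, 1), "Pricing starts at 10 per seat."),
    SourceRecord("cms", date(2024, 6, 15), "Pricing starts at 14 per seat."),
]

fresh = retrieve(index, "pricing", not_before=date(2024, 1, 1))
anything = retrieve(index, "pricing", not_before=date(2020, 1, 1))
```

With the stricter cutoff only the CMS record survives; with the looser one the outdated snapshot is also available to the writer stage, which is how stale figures end up in otherwise fluent copy.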

Prompt Engineering: The New Editorial Desk

Prompt engineering is the hidden craft behind persuasive AI output. Think of prompts as editorial briefs: they define audience, tone, word count, required subtopics and forbidden claims. Skilled teams maintain prompt libraries, sets of tested templates that act like style guides. Beyond basic prompts, systems use dynamic prompts that adapt in-flight: if a fact-checker raises a flag, subsequent prompts shift focus to verification and sourcing. Treating prompts as code turns editors into developers of voice: they version prompts, A/B test them and roll back when a pattern of weak performance emerges. This hybrid editorial/engineering role is one reason some companies have built internal prompt governance rather than relying solely on the model provider.
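One way to picture prompts treated as code is a small library that stores successive versions of each template and can roll back to the previous one. The `PromptLibrary` class and its method names are hypothetical; the sketch uses only `string.Template` from the Python standard library.

```python
from string import Template


class PromptLibrary:
    """Stores successive versions of each named prompt; rollback discards the latest."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}

    def publish(self, name: str, template: str) -> int:
        # Append a new version and return its 1-based version number.
        self._versions.setdefault(name, []).append(template)
        return len(self._versions[name])

    def rollback(self, name: str) -> int:
        # Drop the newest version so the previous one becomes active again.
        versions = self._versions[name]
        if len(versions) < 2:
            raise ValueError(f"no earlier version of '{name}' to roll back to")
        versions.pop()
        return len(versions)

    def render(self, name: str, **fields: str) -> str:
        # Fill the active (latest) template; a missing field raises KeyError.
        return Template(self._versions[name][-1]).substitute(**fields)


library = PromptLibrary()
library.publish("intro", "Write a $tone intro for $audience.")
library.publish("intro", "Write a $tone intro for $audience in under $words words.")

v2 = library.render("intro", tone="plain", audience="engineers", words="80")
library.rollback("intro")
v1 = library.render("intro", tone="plain", audience="engineers")
```

A production setup would more likely keep templates in version control and record which version produced which article, but the publish, render and rollback cycle is the same idea.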

Post-Generation Curation: Where Human and Machine Really Meet

Contrary to the myth of pure automation, high-quality AI content usually passes through human curation. Editors correct nuance, patch hallucinations, ensure legal compliance and tune calls to action. The human role is less about writing every sentence and more about quality assurance, framing and amplification strategy. Many platforms implement a three-stage workflow: the model generates a first draft, humans refine it, and the system then re-checks the edited copy so that SEO and brand voice remain consistent. That loop (machine draft, human edit, machine refine) creates a final product that combines speed with reliability.

Delivery and Integration: From Model Output to Live Post

Publication is often the least visible engineering challenge. Delivering AI content into CMSs like WordPress or HubSpot requires connectors, formatters and scheduling logic. Rich text, images, metadata, internal links and taxonomy tags must be mapped correctly; otherwise the article will appear broken or poorly optimised. Services such as autoarticle.net focus on these plumbing details, offering automatic generation directly into WordPress and HubSpot blogs so that the output retains structure, metadata and SEO attributes without manual copy‑and‑paste. Behind the scenes, webhooks, API keys, sanitisation filters and role-based approval workflows determine whether content is posted instantly, queued for review or enriched with dynamic widgets like related posts.
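The mapping step can be sketched as a function that turns an internal article object into a post payload. The field names below are loosely modelled on the post fields of the WordPress REST API (`title`, `content`, `excerpt`, `slug`, `tags`, `status`), but the `Article` type, the helper functions and the review rule are assumptions, and the script-stripping is deliberately crude rather than a real sanitiser. No request is sent.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Article:
    title: str
    body_html: str
    summary: str
    tag_ids: list[int] = field(default_factory=list)


def slugify(title: str) -> str:
    # Lowercase, then collapse runs of non-alphanumerics into single hyphens.
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def strip_scripts(body_html: str) -> str:
    # Illustrative only: removes <script> blocks and nothing else.
    return re.sub(
        r"<script\b.*?</script\s*>", "", body_html,
        flags=re.IGNORECASE | re.DOTALL,
    )


def to_post_payload(article: Article, needs_review: bool) -> dict:
    # Map internal fields onto the payload a CMS connector would submit.
    return {
        "title": article.title,
        "content": strip_scripts(article.body_html),
        "excerpt": article.summary,
        "slug": slugify(article.title),
        "tags": article.tag_ids,
        # Queue for an editor or publish immediately.
        "status": "pending" if needs_review else "publish",
    }


payload = to_post_payload(
    Article(
        title="Why Seams Show",
        body_html="<p>Hello</p><script>alert(1)</script>",
        summary="Short summary.",
        tag_ids=[3, 7],
    ),
    needs_review=True,
)
```

Keeping the mapping in one pure function makes it easy to test that structure and metadata survive the trip before any API key or webhook is involved.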

Why Hallucinations Happen — And What Practitioners Do About Them

Hallucinations are not random bugs but predictable failure modes: models will confidently invent specifics when retrieval fails or when the prompt rewards fluency over fidelity. The practical fixes are systemic: integrate fact-check retrieval, apply contradiction detectors, enforce citation rules, and include human verification gates for claims whose confidence score falls below a set threshold. For transactional or regulated content, many teams lock models into deterministic templates or require exact-source quoting to avoid legal risk. Knowing these mitigation patterns helps distinguish between reckless automation and mature AI content programmes.
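A verification gate of this kind can be sketched in a few lines. The sketch assumes that an upstream checker (hypothetical here) attaches a confidence score and a citation flag to each claim, and that the gate holds the claims the system is least sure about for an editor; the `Claim` type, the `route` function, the 0.8 threshold and the sample claims are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    confidence: float   # 0.0 to 1.0, from a hypothetical upstream checker
    has_citation: bool


def route(claim: Claim, threshold: float = 0.8) -> str:
    """Decide what happens to a claim before publication."""
    if not claim.has_citation:
        return "reject"          # citation rule: unsourced claims never ship
    if claim.confidence < threshold:
        return "human_review"    # sourced but uncertain: hold for an editor
    return "publish"


# Invented example claims covering each branch.
claims = [
    Claim("Founded in 2011.", 0.95, True),
    Claim("Revenue grew 40% last year.", 0.55, True),
    Claim("Largest vendor in Europe.", 0.90, False),
]
decisions = [route(c) for c in claims]
```

The order of the checks matters: the citation rule is applied first, so a confident but unsourced claim is rejected rather than waved through.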

Economics and Ethics: The Trade-offs Most Readers Don’t See

Finally, producing AI content involves trade-offs that rarely make it into consumer narratives. Faster output and lower marginal cost can erode editorial jobs, but they also enable personalised content at scale — product pages, customised newsletters and microcopy adapted per user. Organisations must balance speed, accuracy, brand integrity and staff impact. Ethical programmes include provenance labels (disclosing AI assistance), audit trails for content lineage, and clear escalation paths when model output conflicts with policy. Companies that invest in these safeguards pay higher upfront costs but gain trust and long-term value.