Why the question matters: context from the field

Auto‑generated WordPress posts are no longer a niche experiment; they sit at the intersection of content strategy, search engineering and editorial ethics. For many organisations the promise is simple: scale content production without hiring an army of writers. Experts in SEO and newsroom technology, however, frame it differently: not as a silver bullet, but as an operational multiplier that requires governance.

Industry consultants emphasise that the decision to auto‑generate content should be strategic, not tactical. That means defining clear goals (lead generation, topical coverage, internal knowledge base), measuring against hard metrics (engagement, dwell time, conversions) and building feedback loops so human editors can correct and refine the output. In other words, automation augments capacity; it does not replace editorial judgment.

What professionals say: themes from interviews and panels

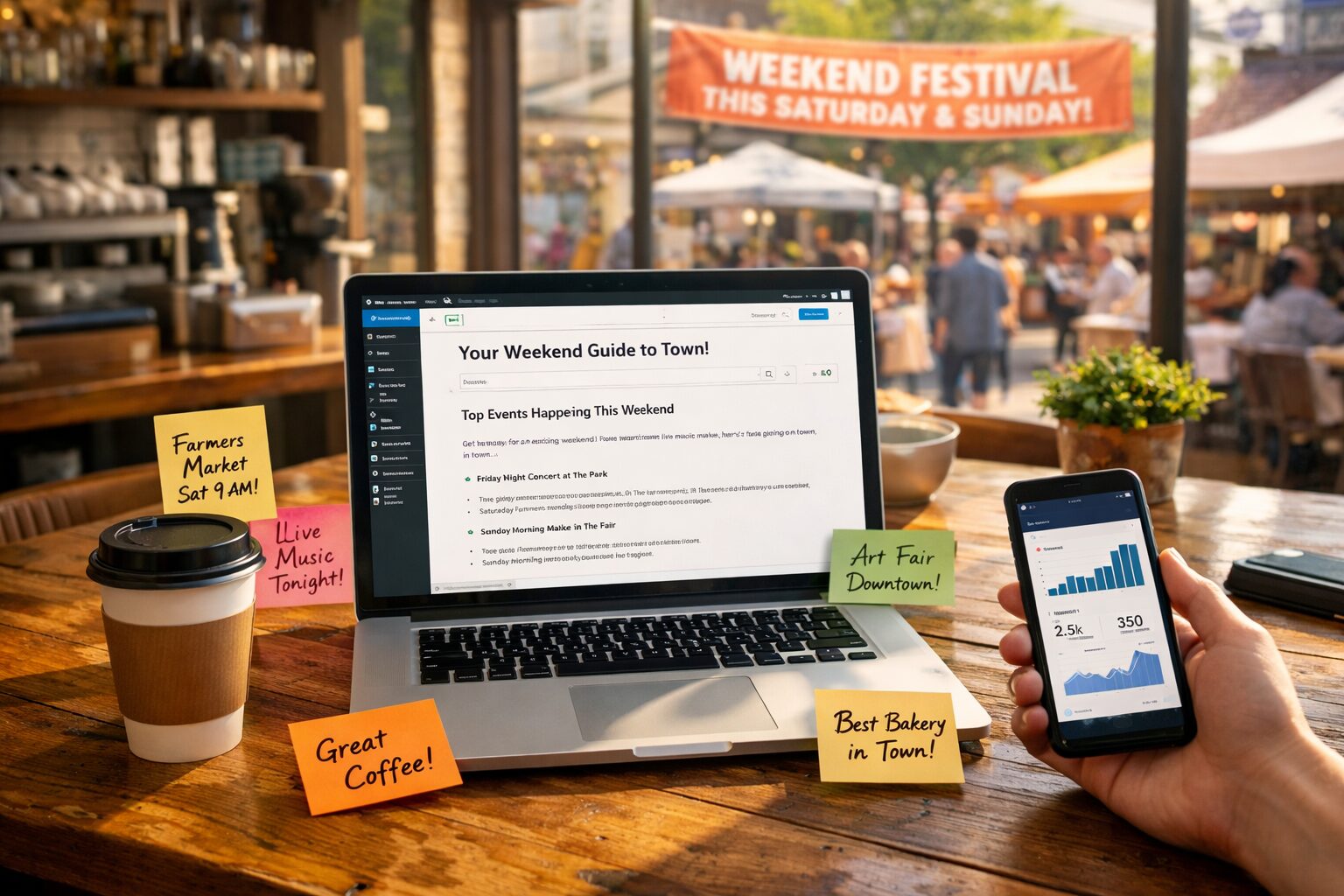

Senior editors and technical leads who have adopted automated article tools tend to repeat three main observations. First, quality controls matter more than the generation method. One managing editor told a panel that a single poorly framed auto‑generated post can lose the trust of a niche audience far faster than ten well‑curated posts can earn it. Second, context beats quantity: experts prefer automation for predictable, data‑driven formats (product descriptions, event summaries, FAQ expansions) and reserve bespoke reporting for nuanced topics.

Third, integration is decisive. Developers and CMS architects stress the importance of seamless WordPress workflows, from content staging to taxonomy tagging and scheduled publishing. Services such as autoarticle.net are cited in conversations not because they promise perfection but because they lower the engineering lift: auto‑generation, basic SEO hooks and CMS connectors bundled together let teams pilot workflows before committing significant engineering resources.

Surprising cautions: the experts’ quiet worries

The headline fears — spammy outputs or mass‑produced low‑value pages — are familiar. Less discussed but frequently raised in closed forums are subtler risks. Legal and compliance officers worry about attribution, inadvertent libel, and the replication of copyrighted phrasings across millions of generated posts. Brand strategists highlight tonal drift: machine output can gradually erode a distinct voice if not periodically recalibrated by brand custodians.

Data privacy engineers also flag a technical concern: models trained on sensitive internal documents can leak patterns if prompts and training workflows are not properly segregated. Professionals therefore advocate for provenance logging — keeping an auditable trail showing where content originated, who reviewed it and which model or template produced it.
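As an illustration of what provenance logging could look like in practice, here is a minimal Python sketch. The field names, the `make_record` and `append_record` helpers and the JSON Lines file format are assumptions made for this example, not a description of any particular tool; the point is only that each record captures where the content originated, who reviewed it and which model or template produced it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ProvenanceRecord:
    """One audit-trail entry for a generated post (hypothetical schema)."""
    post_id: int
    source: str              # where the content originated, e.g. a data feed
    generator: str           # model or template that produced the draft
    reviewer: Optional[str]  # editor who signed off; None if not yet reviewed
    content_sha256: str      # fingerprint of the exact text that was reviewed
    recorded_at: str         # ISO 8601 timestamp in UTC


def make_record(post_id, source, generator, body, reviewer=None, now=None):
    """Build a record, hashing the body so later edits are detectable."""
    now = now or datetime.now(timezone.utc)
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return ProvenanceRecord(post_id, source, generator, reviewer,
                            digest, now.isoformat())


def to_json_line(record):
    """Serialise a record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def append_record(path, record):
    """Append the record to an append-only JSON Lines log file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_json_line(record) + "\n")
```

Hashing the body rather than storing it keeps the log small and avoids duplicating potentially sensitive text, while still letting an auditor confirm that the published version matches the one that was signed off.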

Practical frameworks professionals use to deploy auto‑generated posts

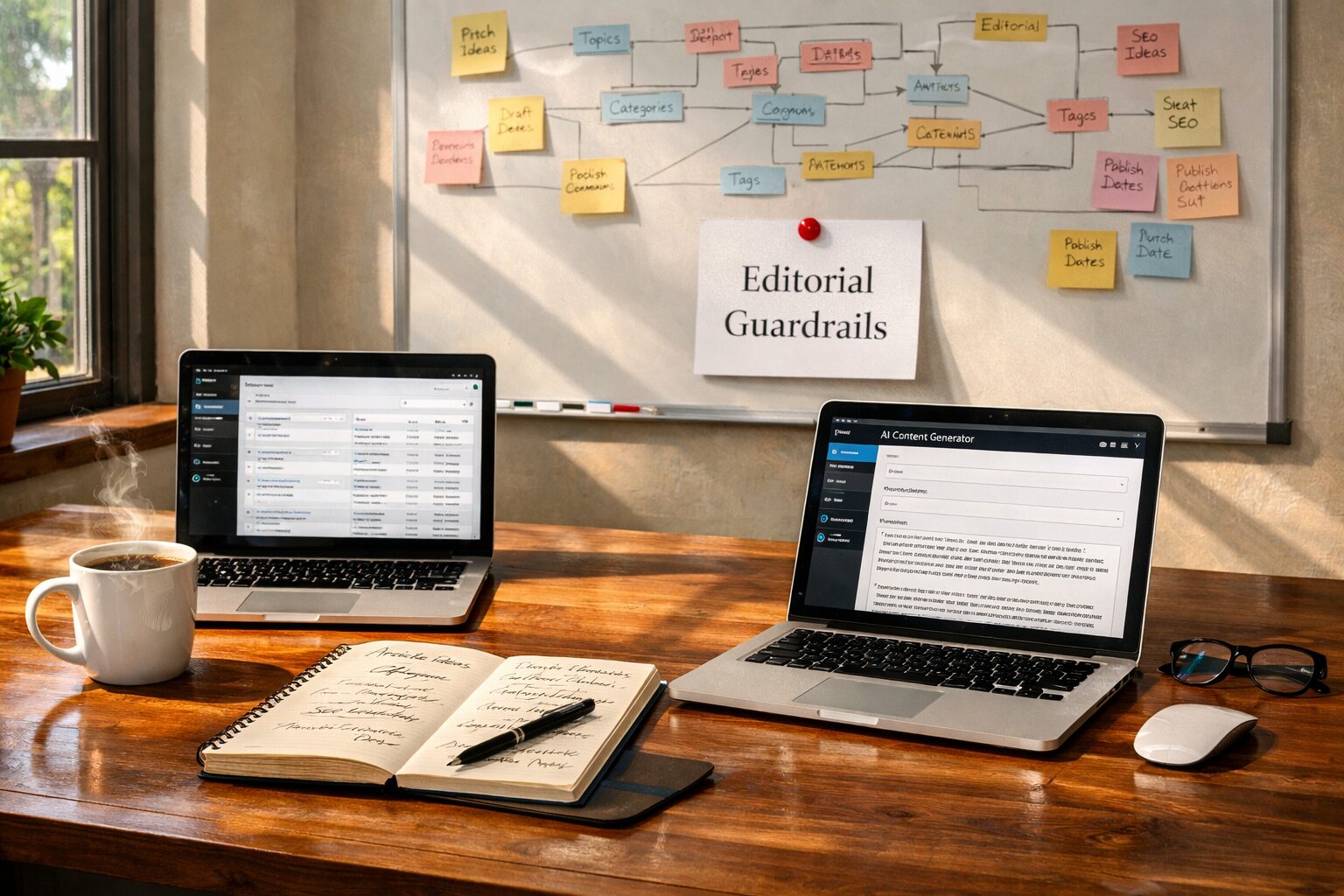

Successful teams adopt a staged approach. Phase one is ‘pilot and guardrails’ — populating non‑public sections of WordPress (staging or private categories) and running A/B tests against human‑written control groups. Phase two is ‘hybrid publishing’ — using auto‑generation for first drafts or data‑rich posts, followed by human editing for tone and accuracy. Phase three, for mature programmes, is ‘automation at scale’ — full pipelines that include automated SEO optimisation, schema markup and scheduled updates.
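One way to make the three phases concrete is to tie each to a WordPress post status, so that a generated article cannot go further than the programme's current phase allows. The sketch below only builds the JSON payload that would be sent to the WordPress REST API posts endpoint (`/wp-json/wp/v2/posts`); authentication and the HTTP request are left out. The `Phase` enum, the phase-to-status mapping and the `build_post_payload` helper are assumptions for illustration, and teams may well choose different statuses.

```python
from datetime import datetime, timezone
from enum import Enum


class Phase(Enum):
    PILOT = "pilot"    # phase one: pilot and guardrails
    HYBRID = "hybrid"  # phase two: hybrid publishing
    SCALE = "scale"    # phase three: automation at scale


# Assumed mapping from rollout phase to WordPress post status.
STATUS_BY_PHASE = {
    Phase.PILOT: "private",  # visible only to logged-in editors
    Phase.HYBRID: "draft",   # waits for a human edit before publishing
    Phase.SCALE: "future",   # scheduled for automatic publication
}


def build_post_payload(phase, title, content, category_ids, publish_at=None):
    """Return a dict suitable as the JSON body of a create-post request."""
    status = STATUS_BY_PHASE[phase]
    payload = {
        "title": title,
        "content": content,
        "status": status,
        "categories": list(category_ids),  # WordPress category term IDs
    }
    if status == "future":
        if publish_at is None or publish_at.tzinfo is None:
            raise ValueError("scheduled posts need a timezone-aware publish_at")
        # date_gmt expects the publication time expressed in GMT/UTC.
        utc = publish_at.astimezone(timezone.utc)
        payload["date_gmt"] = utc.strftime("%Y-%m-%dT%H:%M:%S")
    return payload
```

Keeping the mapping in one place means that moving from pilot to hybrid publishing is a one-line configuration change rather than a rewrite of the pipeline.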

Experts also recommend concrete guardrails: a checklist for editorial sign‑off, a taxonomy map so the generator applies tags and categories consistently, and routine audits (monthly quality reviews, automated anomaly detection for traffic dips). Tools like autoarticle.net are valuable in these frameworks because they provide CMS connectors and templates that accelerate the pilot phases.
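The automated anomaly detection mentioned above does not need to be elaborate to be useful. As a rough sketch, the Python function below flags days whose page views fall well below a trailing baseline. The seven-day window, the two-standard-deviation threshold and the function name `find_traffic_dips` are assumptions chosen for the example; a production audit would likely also account for weekly seasonality.

```python
from statistics import mean, stdev


def find_traffic_dips(daily_views, window=7, threshold=2.0):
    """Return indices of days whose views drop sharply below the baseline.

    Each day is compared with the mean and sample standard deviation of
    the preceding `window` days; a day is flagged when it sits at least
    `threshold` standard deviations below that mean.
    """
    dips = []
    for i in range(window, len(daily_views)):
        baseline = daily_views[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any decrease as a dip.
            if daily_views[i] < mu:
                dips.append(i)
            continue
        z_score = (daily_views[i] - mu) / sigma
        if z_score <= -threshold:
            dips.append(i)
    return dips
```

For example, `find_traffic_dips([100, 102, 98, 101, 99, 103, 97, 100, 40, 101])` returns `[8]`: the day with 40 views is flagged, while the recovery on the following day is not.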

The future professionals are preparing for

Experts foresee a future where auto‑generated WordPress posts are a standard part of the content toolkit, much like CMS plugins or analytics dashboards. The differentiator won’t be raw generation capability but how organisations govern, personalise and iterate on generated content. Expect tighter integration with first‑party data (customised narratives based on user segments), deeper editorial collaboration features and stronger provenance and rights management.

Finally, the most progressive teams treat automation as an opportunity to elevate human work. Rather than replacing writers, professionals increasingly design workflows where human creators focus on original reporting, creative strategy and high‑impact storytelling, while machines handle repetitive, data‑dense tasks.

Practical takeaways for content leaders

If you are considering auto‑generated posts for WordPress, start small, measure everything and build clear editorial pipelines. Prioritise formats that benefit from predictable structure, invest in QA and provenance logging, and ensure legal and brand teams are part of the rollout. Use packaged tools to reduce engineering overhead, but treat every generated article as a draft requiring human oversight.

The consensus among professionals is straightforward: automation is powerful, but its value is realised through careful integration into human workflows — not as a shortcut around thoughtful publishing practice.